We’ve lengthy acknowledged that developer environments signify a weak

level within the software program provide chain. Builders, by necessity, function with

elevated privileges and quite a lot of freedom, integrating numerous parts

immediately into manufacturing programs. Because of this, any malicious code launched

at this stage can have a broad and vital influence radius significantly

with delicate knowledge and providers.

The introduction of agentic coding assistants (corresponding to Cursor, Windsurf,

Cline, and recently additionally GitHub Copilot) introduces new dimensions to this

panorama. These instruments function not merely as suggestive code mills however

actively work together with developer environments via tool-use and

Reasoning-Motion (ReAct) loops. Coding assistants introduce new parts

and vulnerabilities to the software program provide chain, however can be owned or

compromised themselves in novel and intriguing methods.

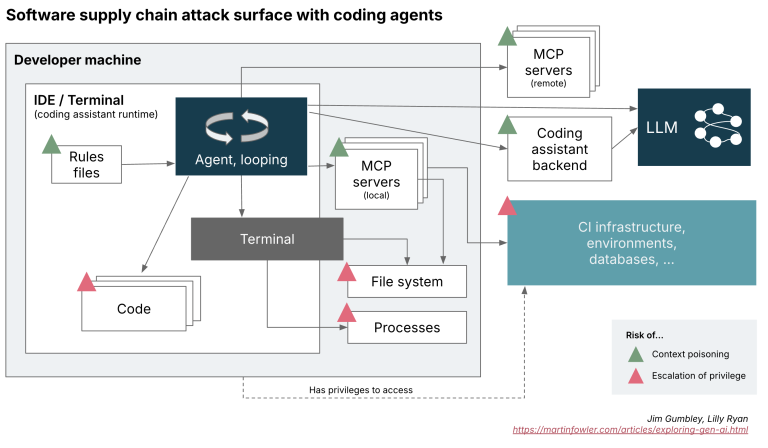

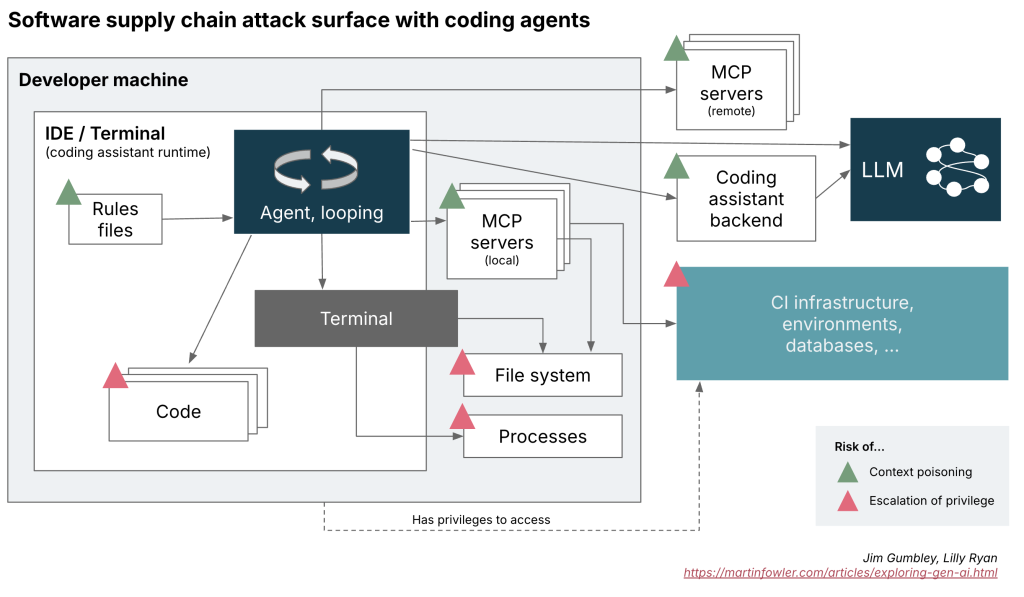

Understanding the Agent Loop Assault Floor

A compromised MCP server, guidelines file or perhaps a code or dependency has the

scope to feed manipulated directions or instructions that the agent executes.

This is not only a minor element – because it will increase the assault floor in contrast

to extra conventional improvement practices, or AI-suggestion based mostly programs.

Determine 1: CD pipeline, emphasizing how

directions and code transfer between these layers. It additionally highlights provide

chain parts the place poisoning can occur, in addition to key parts of

escalation of privilege

Every step of the agent circulate introduces danger:

- Context Poisoning: Malicious responses from exterior instruments or APIs

can set off unintended behaviors throughout the assistant, amplifying malicious

directions via suggestions loops. - Escalation of privilege: A compromised assistant, significantly if

evenly supervised, can execute misleading or dangerous instructions immediately by way of

the assistant’s execution circulate.

This advanced, iterative surroundings creates a fertile floor for delicate

but highly effective assaults, considerably increasing conventional risk fashions.

Conventional monitoring instruments may wrestle to determine malicious

exercise as malicious exercise or delicate knowledge leakage can be more durable to identify

when embedded inside advanced, iterative conversations between parts, as

the instruments are new and unknown and nonetheless growing at a fast tempo.

New weak spots: MCP and Guidelines Information

The introduction of MCP servers and guidelines information create openings for

context poisoning—the place malicious inputs or altered states can silently

propagate via the session, enabling command injection, tampered

outputs, or provide chain assaults by way of compromised code.

Mannequin Context Protocol (MCP) acts as a versatile, modular interface

enabling brokers to attach with exterior instruments and knowledge sources, keep

persistent classes, and share context throughout workflows. Nevertheless, as has

been highlighted

elsewhere,

MCP basically lacks built-in security measures like authentication,

context encryption, or instrument integrity verification by default. This

absence can go away builders uncovered.

Guidelines Information, corresponding to for instance “cursor guidelines”, include predefined

prompts, constraints, and pointers that information the agent’s conduct inside

its loop. They improve stability and reliability by compensating for the

limitations of LLM reasoning—constraining the agent’s attainable actions,

defining error dealing with procedures, and guaranteeing give attention to the duty. Whereas

designed to enhance predictability and effectivity, these guidelines signify

one other layer the place malicious prompts will be injected.

Instrument-calling and privilege escalation

Coding assistants transcend LLM generated code strategies to function

with tool-use by way of perform calling. For instance, given any given coding

process, the assistant might execute instructions, learn and modify information, set up

dependencies, and even name exterior APIs.

The specter of privilege escalation is an rising danger with agentic

coding assistants. Malicious directions, can immediate the assistant

to:

- Execute arbitrary system instructions.

- Modify important configuration or supply code information.

- Introduce or propagate compromised dependencies.

Given the developer’s sometimes elevated native privileges, a

compromised assistant can pivot from the native surroundings to broader

manufacturing programs or the sorts of delicate infrastructure often

accessible by software program builders in organisations.

What are you able to do to safeguard safety with coding brokers?

Coding assistants are fairly new and rising as of when this was

revealed. However some themes in applicable safety measures are beginning

to emerge, and plenty of of them signify very conventional greatest practices.

- Sandboxing and Least Privilege Entry management: Take care to restrict the

privileges granted to coding assistants. Restrictive sandbox environments

can restrict the blast radius. - Provide Chain scrutiny: Fastidiously vet your MCP Servers and Guidelines Information

as important provide chain parts simply as you’d with library and

framework dependencies. - Monitoring and observability: Implement logging and auditing of file

system adjustments initiated by the agent, community calls to MCP servers,

dependency modifications and so on. - Explicitly embody coding assistant workflows and exterior

interactions in your risk

modeling

workouts. Take into account potential assault vectors launched by the

assistant. - Human within the loop: The scope for malicious motion will increase

dramatically whenever you auto settle for adjustments. Don’t turn into over reliant on

the LLM

The ultimate level is especially salient. Speedy code era by AI

can result in approval fatigue, the place builders implicitly belief AI outputs

with out understanding or verifying. Overconfidence in automated processes,

or “vibe coding,” heightens the chance of inadvertently introducing

vulnerabilities. Cultivating vigilance, good coding hygiene, and a tradition

of conscientious custodianship stay actually essential in skilled

software program groups that ship manufacturing software program.

Agentic coding assistants can undeniably present a lift. Nevertheless, the

enhanced capabilities include considerably expanded safety

implications. By clearly understanding these new dangers and diligently

making use of constant, adaptive safety controls, builders and

organizations can higher hope to safeguard in opposition to rising threats within the

evolving AI-assisted software program panorama.