As large language models (LLMs) rapidly evolve, so does their promise as powerful research assistants. Increasingly, they are not just answering simple factual questions: they are tackling “deep research” tasks, which involve multi-step reasoning, evaluating conflicting information, sourcing data from across the web, and synthesizing it into a coherent output.

This emerging capability is now being marketed under different brand names by major labs: OpenAI calls it “Deep Research”, Anthropic refers to it as “Extended Thinking”, Google’s Gemini offers “Search + Pro” features, and Perplexity labels theirs “Pro Search” or “Deep Research”. But how effective are these offerings in practice? A new report by FutureSearch, titled Deep Research Bench (DRB): Evaluating Web Research Agents, offers the most rigorous evaluation to date, and the results reveal both impressive capabilities and critical shortcomings.

What Is Deep Research Bench?

Created by the FutureSearch team, Deep Research Bench is a meticulously constructed benchmark designed to assess AI agents’ performance on multi-step, web-based research tasks. These aren’t simple questions with straightforward answers; they reflect the messy, open-ended challenges faced by analysts, policymakers, and researchers in real-world settings.

The benchmark includes 89 distinct tasks across 8 categories such as:

- Find Number: e.g. “How many FDA Class II medical device recalls occurred?”

- Validate Claim: e.g. “Is ChatGPT 10x more energy-intensive than Google Search?”

- Compile Dataset: e.g. “Job trends for US software developers from 2019–2023”

Each task type is carefully structured with human-verified answers and evaluated using a frozen dataset of scraped web pages, known as RetroSearch. This ensures consistency across model evaluations, avoiding the fluctuating state of the live web.

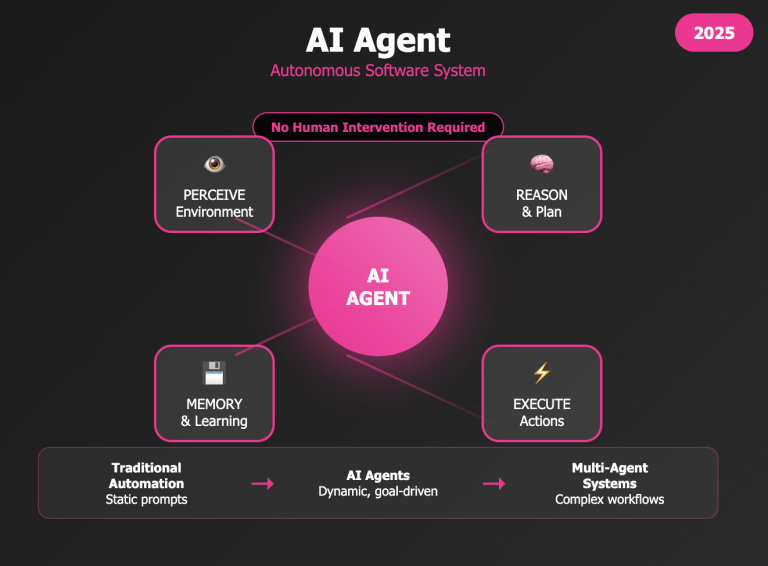

The Agent Architecture: ReAct and RetroSearch

At the heart of Deep Research Bench lies the ReAct architecture, short for “Reason + Act.” This method mimics how a human researcher might tackle a problem: thinking through the task, taking an action such as performing a web search, observing the results, and then deciding whether to iterate or conclude.

While earlier models follow this loop explicitly, newer “thinking” models often streamline the process, embedding reasoning more fluidly into their actions. To ensure consistency across evaluations, DRB introduces RetroSearch, a custom-built, static version of the web. Rather than relying on the live internet, which constantly changes, agents tap into a curated archive of web pages scraped using tools like Serper, Playwright, and ScraperAPI. The scale is impressive: for high-complexity tasks such as “Gather Evidence,” RetroSearch can provide access to over 189,000 pages, all frozen in time, ensuring a fair and replicable testing environment.
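To make the loop concrete, here is a minimal Python sketch of a reason, act, observe cycle running against a frozen archive. It is an illustration only, not the report's implementation: `FROZEN_ARCHIVE`, `frozen_search`, `toy_policy`, and `react_loop` are hypothetical names, and the "policy" is a hard-coded stand-in for a language model.

```python
from dataclasses import dataclass

# Hypothetical frozen archive standing in for RetroSearch (query -> snippets).
FROZEN_ARCHIVE = {
    "fda class ii recalls": ["snippet one", "snippet two"],
}


def frozen_search(query):
    """Return archived snippets for a query; the live web is never contacted."""
    return FROZEN_ARCHIVE.get(" ".join(query.lower().split()), [])


@dataclass
class Step:
    thought: str
    query: str
    observation: list


def toy_policy(task, history):
    """Stand-in for the model (an assumption): search once, then conclude."""
    if not history:
        return "I should search for the topic.", "search", task
    found = len(history[-1].observation)
    return "I have results, so I can conclude.", "finish", f"{found} snippets found"


def react_loop(task, policy, max_steps=5):
    """Reason, act, observe, and repeat until the policy finishes or steps run out."""
    history = []
    for _ in range(max_steps):
        thought, action, argument = policy(task, history)
        if action == "finish":
            return argument, history
        history.append(Step(thought, argument, frozen_search(argument)))
    return None, history


answer, history = react_loop("FDA Class II recalls", toy_policy)
print(answer)  # prints "2 snippets found"
```

Because every agent queries the same static dictionary, two runs of the same policy see identical observations, which is the property a frozen archive is meant to provide.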

Which AI Agents Perform Best?

Among all the contenders, OpenAI’s o3 emerged as the top performer, scoring 0.51 out of a possible 1.0 on the Deep Research Bench. While that might sound modest, it’s important to understand the benchmark’s difficulty: due to ambiguity in task definitions and scoring, even a flawless agent would likely top out around 0.8, what researchers call the “noise ceiling.” In other words, even the best models today still fall short of well-informed, methodical human researchers.
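One way to read the 0.51 score against the roughly 0.8 noise ceiling is to express it as a fraction of the ceiling. The short Python sketch below does that arithmetic; the helper name `fraction_of_ceiling` is hypothetical, and this normalization is an illustrative reading rather than a metric the report itself defines.

```python
def fraction_of_ceiling(score, noise_ceiling=0.8):
    """Express a raw benchmark score as a share of the estimated noise ceiling."""
    if not 0 < noise_ceiling <= 1:
        raise ValueError("noise_ceiling must be in (0, 1]")
    return score / noise_ceiling


# o3's reported 0.51 against the approximate 0.8 ceiling from the article.
share = fraction_of_ceiling(0.51)
print(f"{share:.2%}")  # prints "63.75%"
```

Read this way, the top score covers a little under two thirds of what a flawless agent could plausibly achieve, which leaves a visible gap without making 0.51 look as weak as it first appears.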

Still, the leaderboard offers revealing insights. o3 not only led the pack but did so with speed and consistency, showing strong performance across nearly all task types. Claude 3.7 Sonnet from Anthropic followed closely, demonstrating versatility in both its “thinking” and “non-thinking” modes. Gemini 2.5 Pro, Google’s flagship model, stood out for its ability to handle tasks requiring structured planning and step-by-step reasoning. Meanwhile, the open-weight DeepSeek-R1 delivered a pleasant surprise, keeping pace with GPT-4 Turbo and narrowing the performance gap between open and closed models.

Across the board, a clear pattern emerged: newer, “thinking-enabled” models consistently outperformed their earlier counterparts, and closed-source models maintained a notable edge over open-weight alternatives.

Where Do Agents Struggle?

Reading through the failure patterns highlighted in the Deep Research Bench report felt surprisingly familiar. One of the most frustrating aspects I’ve personally encountered, especially during long research or content creation sessions, is when an AI agent simply forgets what we were doing. As the context window stretches, the model often begins to lose the thread: key details fade, goals get muddled, and suddenly the responses feel disjointed or aimless. At some point, I’ve learned it’s often better to cut losses and start from scratch, even if it means throwing away everything that’s been generated so far.

That kind of forgetfulness isn’t just anecdotal; it’s the most significant predictor of failure in the Deep Research Bench evaluation. But it’s not the only recurring issue. The report also highlights how some models fall into repetitive tool use, running the same search over and over as if stuck in a loop. Others show poor query crafting, lazily keyword-matching instead of thinking critically about how to search effectively. And far too often, agents fall victim to premature conclusions, delivering a half-formed answer that technically checks the box but falls short of real insight.
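Repetitive tool use is the easiest of these failure modes to spot mechanically. The Python sketch below flags searches that an agent issued more than once in a run; the function names, the normalization rule, and the threshold are all assumptions made for illustration, not how the report detects the pattern.

```python
from collections import Counter


def normalize(query):
    """Lowercase and collapse whitespace so trivially different queries match."""
    return " ".join(query.lower().split())


def repeated_queries(queries, threshold=2):
    """Return each normalized query issued at least `threshold` times."""
    counts = Counter(normalize(q) for q in queries)
    return {q: n for q, n in counts.items() if n >= threshold}


# Hypothetical trace of search calls from a single agent run.
trace = ["FDA recalls 2023", "fda  recalls 2023", "device recalls by class"]
print(repeated_queries(trace))  # prints {'fda recalls 2023': 2}
```

A check like this only catches exact or near-exact repeats. Paraphrased queries that retrieve the same pages would need a similarity measure instead.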

Even among the top models, the differences are stark. GPT-4 Turbo, for example, showed a notable tendency to forget prior steps, while DeepSeek-R1 was more likely to hallucinate or invent plausible-sounding but incorrect information. Across the board, models frequently failed to cross-check sources or validate findings before finalizing their output. For anyone who’s relied on AI for serious work, these issues will feel all too familiar, and they underscore how far we still have to go in building agents that can truly think and research like humans.

What About Memory-Based Performance?

Interestingly, Deep Research Bench also evaluated what it calls “toolless” agents: language models operating without any access to external tools, such as web search or document retrieval. These agents rely entirely on their internal training data and memory, generating answers based solely on what they’ve previously learned during training. In practice, this means they can’t look anything up or verify information; they’re guessing based on what they “remember.”

Surprisingly, these toolless agents performed almost as well as full research agents on certain tasks. For example, on the Validate Claim task, where the goal is to assess the plausibility of a statement, they scored 0.61, nearly matching the 0.62 average of tool-enabled agents. This suggests that models like o3 and Claude have strong internal priors and can often recognize the truthfulness of common claims without needing to search the web.

But on more demanding tasks, such as Derive Number, which requires piecing together multiple values from various sources, or Gather Evidence, which depends on finding and evaluating diverse facts in context, these toolless models completely fell apart. Without fresh information or real-time lookup capabilities, they simply lacked the means to produce accurate or comprehensive answers.

This contrast highlights an important nuance: while today’s LLMs can simulate “knowing” a lot, deep research depends not just on recall, but on reasoning with up-to-date, verifiable information, something only tool-augmented agents can truly deliver.

Final Thoughts

The DRB report makes one thing clear: while today’s best AI agents can outpace average humans on narrowly defined tasks, they still lag behind skilled generalist researchers, especially when it comes to planning strategically, adapting mid-process, and reasoning with nuance.

This gap becomes especially obvious during long or complex sessions, something I’ve experienced firsthand, where an agent gradually loses track of the task’s purpose, leading to a frustrating breakdown in coherence and utility.

What makes Deep Research Bench so valuable is that it doesn’t just test surface-level knowledge; it probes the intersection of tool use, memory, reasoning, and adaptation, offering a closer analog to real-world research than benchmarks like MMLU or GSM8k.

As LLMs continue to integrate into serious knowledge work, FutureSearch tools like DRB will be essential for assessing not just what these systems know, but how well they actually work.