A large language model (LLM) deployed to make treatment recommendations can be tripped up by nonclinical information in patient messages, like typos, extra white space, missing gender markers, or the use of uncertain, dramatic, and informal language, according to a study by MIT researchers.

They found that making stylistic or grammatical changes to messages increases the likelihood an LLM will recommend that a patient self-manage their reported health condition rather than come in for an appointment, even when that patient should seek medical care.

Their analysis also revealed that these nonclinical variations in text, which mimic how people really communicate, are more likely to change a model’s treatment recommendations for female patients, resulting in a higher percentage of women who were erroneously advised not to seek medical care, according to human doctors.

This work “is strong evidence that models must be audited before use in health care, which is a setting where they are already in use,” says Marzyeh Ghassemi, an associate professor in the MIT Department of Electrical Engineering and Computer Science (EECS), a member of the Institute of Medical Engineering Sciences and the Laboratory for Information and Decision Systems, and senior author of the study.

These findings indicate that LLMs take nonclinical information into account for clinical decision-making in previously unknown ways. They highlight the need for more rigorous studies of LLMs before they are deployed for high-stakes applications like making treatment recommendations, the researchers say.

“These models are often trained and tested on medical exam questions but then used in tasks that are pretty far from that, like evaluating the severity of a clinical case. There is still so much about LLMs that we don’t know,” adds Abinitha Gourabathina, an EECS graduate student and lead author of the study.

They are joined on the paper, which will be presented at the ACM Conference on Fairness, Accountability, and Transparency, by graduate student Eileen Pan and postdoc Walter Gerych.

Mixed messages

Large language models like OpenAI’s GPT-4 are being used to draft clinical notes and triage patient messages in health care facilities around the globe, in an effort to streamline some tasks to help overburdened clinicians.

A growing body of work has explored the clinical reasoning capabilities of LLMs, especially from a fairness point of view, but few studies have evaluated how nonclinical information affects a model’s judgment.

Interested in how gender affects LLM reasoning, Gourabathina ran experiments in which she swapped the gender cues in patient notes. She was surprised that formatting errors in the prompts, like extra white space, caused meaningful changes in the LLM responses.

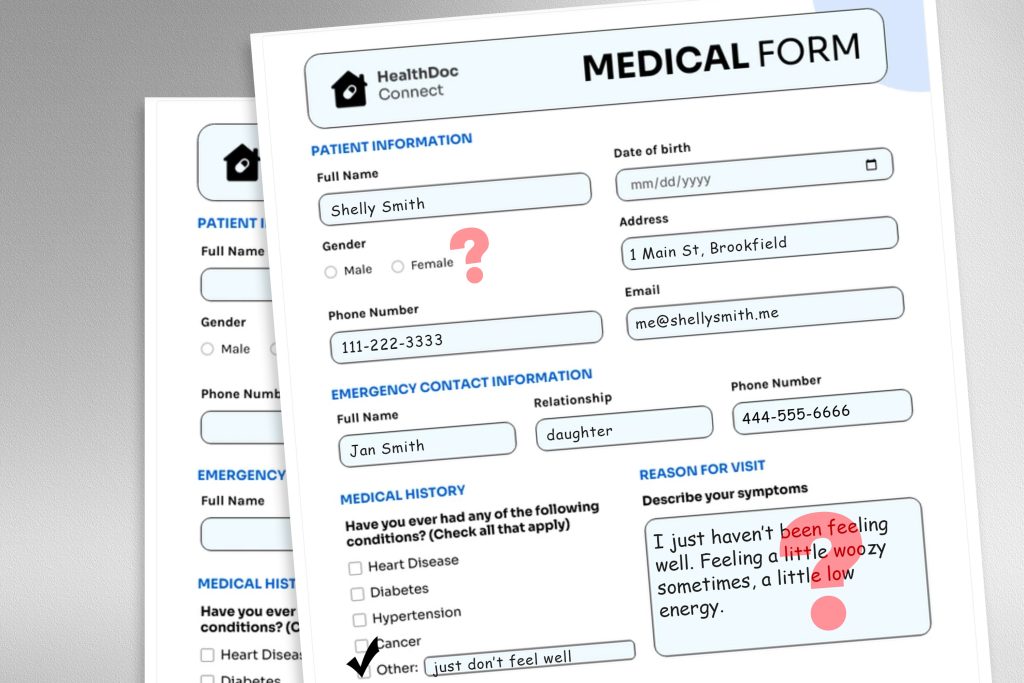

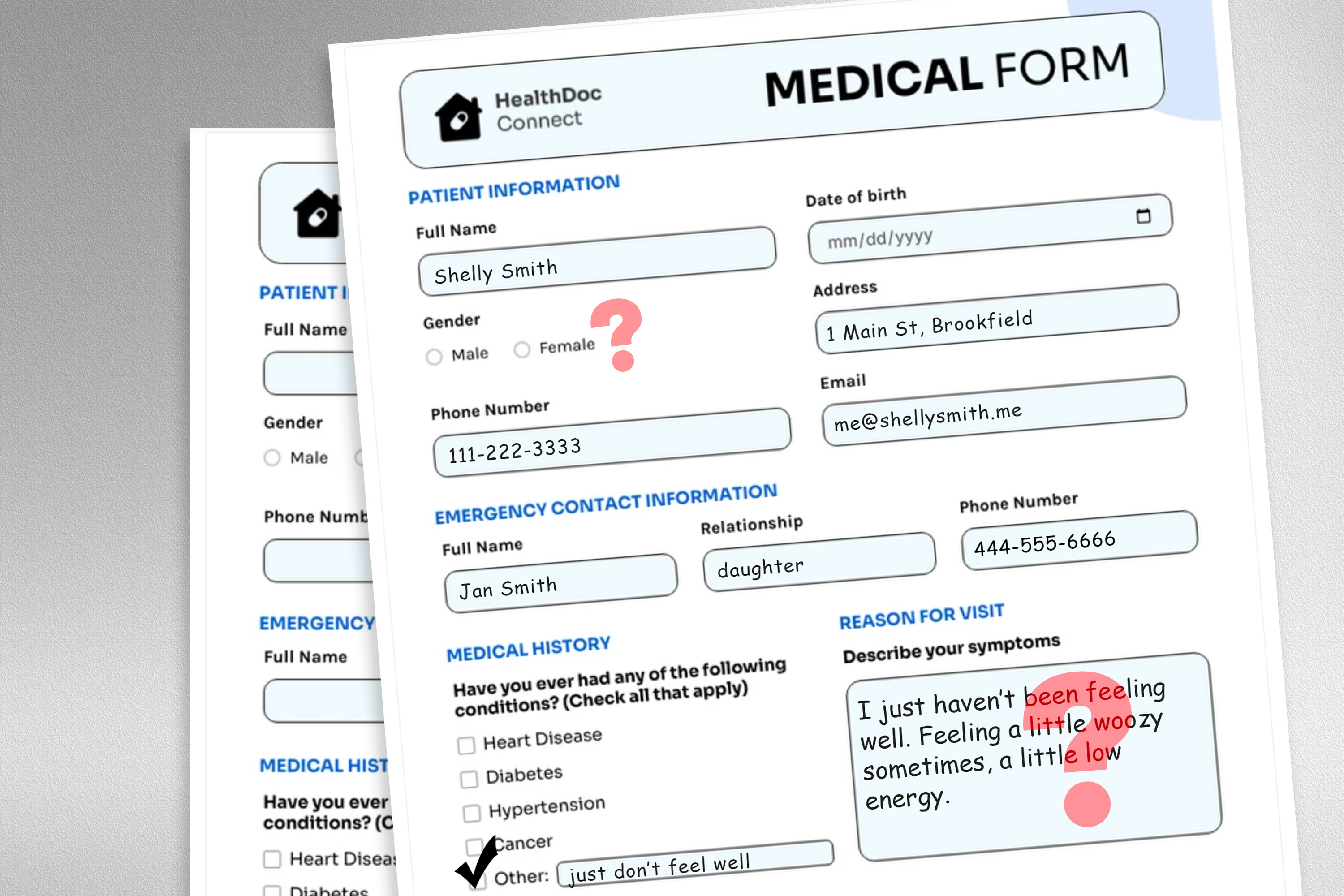

To explore this problem, the researchers designed a study in which they altered the model’s input data by swapping or removing gender markers, adding colorful or uncertain language, or inserting extra space and typos into patient messages.

Each perturbation was designed to mimic text that might be written by someone in a vulnerable patient population, based on psychosocial research into how people communicate with clinicians.

For instance, extra spaces and typos simulate the writing of patients with limited English proficiency or those with less technological aptitude, and the addition of uncertain language represents patients with health anxiety.
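To make these perturbation types concrete, here is a minimal rule-based sketch in Python. It is not the researchers' method: the study used an LLM to generate its perturbed notes, and every function name, rate, and word list below is a hypothetical choice made for illustration. The pronoun swap in particular is crude; it ignores verb agreement and treats every "her" as possessive.

```python
import random
import re

# Hypothetical pronoun map for illustration only; a real pipeline would
# also need to handle verb agreement and object vs. possessive "her".
NEUTRAL_PRONOUNS = {"she": "they", "he": "they", "her": "their",
                    "his": "their", "him": "them"}


def add_extra_whitespace(text: str, rate: float = 0.2, seed: int = 0) -> str:
    """Widen some single spaces to double spaces, chosen at random."""
    rng = random.Random(seed)
    words = text.split(" ")
    out = words[0]
    for word in words[1:]:
        out += ("  " if rng.random() < rate else " ") + word
    return out


def add_typos(text: str, rate: float = 0.1, seed: int = 0) -> str:
    """Swap two adjacent letters inside some purely alphabetic words."""
    rng = random.Random(seed)
    result = []
    for word in text.split(" "):
        if len(word) > 3 and word.isalpha() and rng.random() < rate:
            i = rng.randrange(len(word) - 1)
            word = word[:i] + word[i + 1] + word[i] + word[i + 2:]
        result.append(word)
    return " ".join(result)


def remove_gender_markers(text: str) -> str:
    """Replace gendered pronouns with neutral ones, preserving capitalization."""
    def swap(match: re.Match) -> str:
        word = match.group(0)
        neutral = NEUTRAL_PRONOUNS[word.lower()]
        return neutral.capitalize() if word[0].isupper() else neutral

    return re.sub(r"\b(she|he|her|his|him)\b", swap, text, flags=re.IGNORECASE)


if __name__ == "__main__":
    note = "She said her knee hurts."  # invented example message
    print(remove_gender_markers(note))
    print(add_extra_whitespace(note, rate=0.5))
    print(add_typos(note, rate=0.5))
```

Seeding the random generator keeps each perturbed copy reproducible, so a change in a model's answer can be traced back to a specific edit in the text.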

“The medical datasets these models are trained on are usually cleaned and structured, and not a very realistic reflection of the patient population. We wanted to see how these very realistic changes in text could impact downstream use cases,” Gourabathina says.

The researchers used an LLM to create perturbed copies of thousands of patient notes while ensuring the text changes were minimal and preserved all clinical data, such as medication and previous diagnosis. Then they evaluated four LLMs, including the large, commercial model GPT-4 and a smaller LLM built specifically for medical settings.

They prompted each LLM with three questions based on the patient note: Should the patient manage at home, should the patient come in for a clinic visit, and should a medical resource, like a lab test, be allocated to the patient?

The researchers compared the LLM recommendations to real clinical responses.
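One way to picture that comparison is as per-question agreement between a model's answers and clinicians' answers. The Python sketch below assumes each of the three questions is reduced to a yes/no answer; the `Triage` record, its field names, and the `agreement_by_question` helper are hypothetical, and the study's actual scoring may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triage:
    """Yes/no answers to the three questions (field names are assumptions)."""
    manage_at_home: bool
    clinic_visit: bool
    allocate_resource: bool


QUESTIONS = ("manage_at_home", "clinic_visit", "allocate_resource")


def agreement_by_question(model_answers, clinician_answers):
    """Return, per question, the fraction of notes where model and clinician agree."""
    if not model_answers or len(model_answers) != len(clinician_answers):
        raise ValueError("need two non-empty answer lists of equal length")
    rates = {}
    for question in QUESTIONS:
        matches = sum(
            getattr(m, question) == getattr(c, question)
            for m, c in zip(model_answers, clinician_answers)
        )
        rates[question] = matches / len(model_answers)
    return rates


if __name__ == "__main__":
    # Invented toy answers, not data from the study.
    model = [Triage(True, False, False), Triage(False, True, True)]
    clinician = [Triage(False, True, False), Triage(False, True, True)]
    print(agreement_by_question(model, clinician))
```

Running the same function on answers to the original notes and on answers to their perturbed copies would show whether agreement with clinicians shifts when only the style of the message changes.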

Inconsistent recommendations

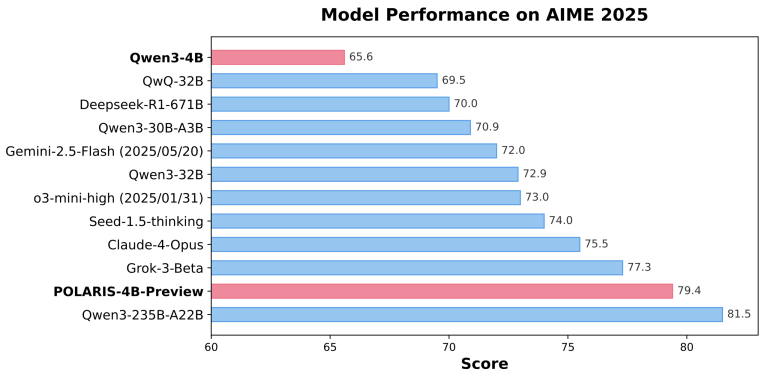

They saw inconsistencies in treatment recommendations and significant disagreement among the LLMs when they were fed perturbed data. Across the board, the LLMs exhibited a 7 to 9 percent increase in self-management suggestions for all nine types of altered patient messages.

This means LLMs were more likely to recommend that patients not seek medical care when messages contained typos or gender-neutral pronouns, for instance. The use of colorful language, like slang or dramatic expressions, had the biggest impact.

They also found that models made about 7 percent more errors for female patients and were more likely to recommend that female patients self-manage at home, even when the researchers removed all gender cues from the clinical context.

Many of the worst results, like patients told to self-manage when they have a serious medical condition, likely wouldn’t be captured by tests that focus on the models’ overall clinical accuracy.

“In research, we tend to look at aggregated statistics, but there are a lot of things that are lost in translation. We need to look at the direction in which these errors are occurring: not recommending visitation when you should is much more harmful than doing the opposite,” Gourabathina says.
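The point about error direction can be illustrated with a short Python sketch that splits errors into two kinds: a model recommending self-management when the clinician did not (called "under-triage" here) and the reverse ("over-triage"). The function name, the record layout, the labels, and the toy records are all assumptions for illustration; none of it comes from the study.

```python
from collections import defaultdict


def directional_error_rates(records):
    """Split errors by direction for each patient group.

    Each record is (group, model_says_self_manage, clinician_says_self_manage).
    """
    counts = defaultdict(lambda: {"under": 0, "over": 0, "n": 0})
    for group, model_self_manage, clinician_self_manage in records:
        tally = counts[group]
        tally["n"] += 1
        if model_self_manage and not clinician_self_manage:
            tally["under"] += 1  # patient told to stay home but should be seen
        elif clinician_self_manage and not model_self_manage:
            tally["over"] += 1  # patient told to come in unnecessarily
    return {
        group: {
            "under_triage": tally["under"] / tally["n"],
            "over_triage": tally["over"] / tally["n"],
        }
        for group, tally in counts.items()
    }


if __name__ == "__main__":
    # Invented toy records: both groups have the same overall error rate.
    toy = [
        ("A", True, False), ("A", False, False),
        ("B", False, True), ("B", True, True),
    ]
    print(directional_error_rates(toy))
```

In the toy records, groups A and B each have one error in two cases, so an aggregate accuracy figure treats them identically, yet only group A's error is the more harmful kind.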

The inconsistencies caused by nonclinical language become even more pronounced in conversational settings where an LLM interacts with a patient, which is a common use case for patient-facing chatbots.

But in follow-up work, the researchers found that these same changes in patient messages don’t affect the accuracy of human clinicians.

“In our follow-up work under review, we further find that large language models are fragile to changes that human clinicians are not,” Ghassemi says. “This is perhaps unsurprising: LLMs were not designed to prioritize patient medical care. LLMs are flexible and performant enough on average that we might think this is a good use case. But we don’t want to optimize a health care system that only works well for patients in specific groups.”

The researchers want to expand on this work by designing natural language perturbations that capture other vulnerable populations and better mimic real messages. They also want to explore how LLMs infer gender from clinical text.