When people ask about the future of Generative AI in coding, what they
usually want to know is: Will there be a point where Large Language Models can
autonomously generate and maintain a working software application? Will we
be able to just author a natural language specification, hit “generate” and
walk away, and AI will be able to do all the coding, testing and deployment
for us?

To learn more about where we are today, and what would have to be solved
on a path from today to a future like that, we ran some experiments to see
how far we could push the autonomy of Generative AI code generation with a
simple application, today. The standard and the quality lens applied to
the results is the use case of developing digital products, business
application software, the type of software that I have been building most in
my career. For example, I’ve worked a lot on large retail and listings
websites, systems that typically provide RESTful APIs, store data in
relational databases, and send events to each other. Risk assessments and
definitions of what good code looks like will be different for other
situations.

The main goal was to learn about AI’s capabilities. A Spring Boot
application like the one in our setup can probably be written in 1-2 hours
by an experienced developer with a powerful IDE, and we don’t even bootstrap
things that much in real life. However, it was an interesting test case to
explore our main question: How might we push autonomy and repeatability of
AI code generation?

For the vast majority of our iterations, we used Claude-Sonnet models
(either 3.7 or 4). These in our experience consistently show the highest
coding capabilities of the available LLMs, so we found them the most
suitable for this experiment.

The strategies

We employed a set of “strategies” one by one to see if and how they can
improve the reliability of the generation and quality of the generated
code. All of the strategies were used to improve the probability that the
setup generates a working, tested and high quality codebase without human
intervention. They were all attempts to introduce more control into the
generation process.

Choice of the tech stack

We chose a simple “CRUD” API backend (Create, Read, Update, Delete)
implemented in Spring Boot as the goal of the generation.

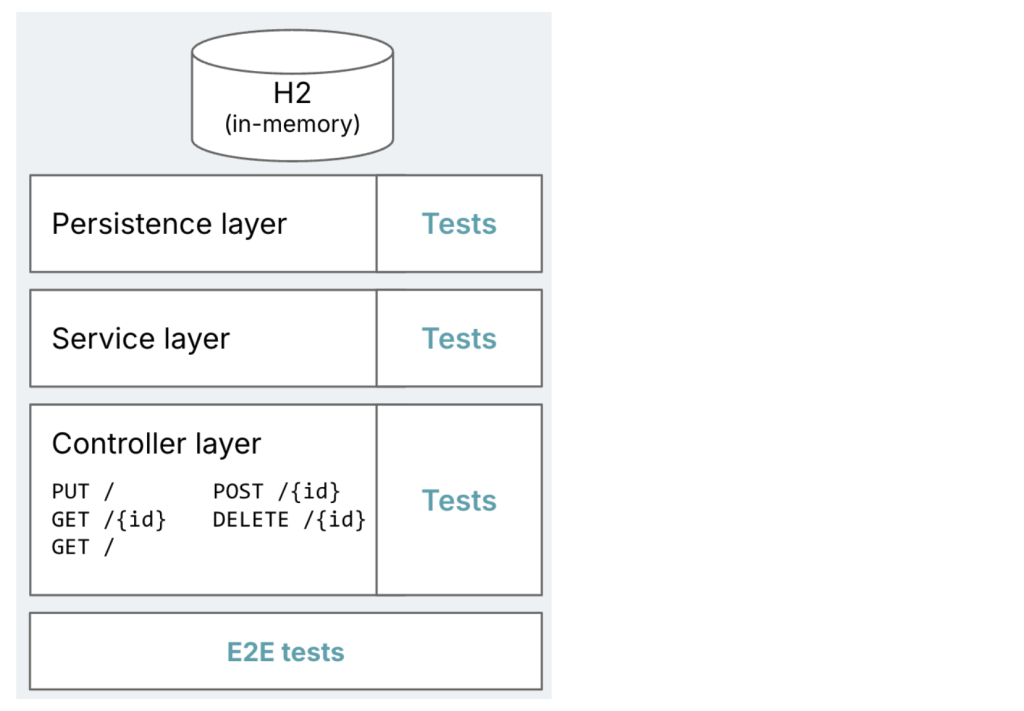

Figure 1: Diagram of the intended
target application, with typical Spring Boot layers of persistence,
services, and controllers. Highlights how each layer should have tests,
plus a set of E2E tests.

As mentioned before, building an application like this is a quite
simple use case. The idea was to start very simple, and then if that
works, crank up the complexity or variety of requirements.

How can this increase the success rate?

The choice of Spring Boot as the target stack was in itself our first
strategy of increasing the chances of success.

- A common tech stack that should be quite prevalent in the training
data
- A runtime framework that can do a lot of the heavy lifting, which means
less code to generate for AI
- An application topology that has very clearly established patterns:
Controller -> Service -> Repository -> Entity, which means that it is
relatively easy to give AI a set of patterns to follow
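To make the Controller -> Service -> Repository -> Entity topology concrete, here is a minimal sketch of the layering in plain Java. It is only an illustration under assumptions: the `Book` domain and all class names are hypothetical, and the Spring annotations a real application would carry are only mentioned in comments so that the sketch compiles without any dependencies.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Entity: in a Spring Boot app this would be a class annotated with @Entity.
record Book(long id, String title) {}

// Repository: stands in for a Spring Data repository; here backed by a map.
class BookRepository {
    private final Map<Long, Book> rows = new HashMap<>();

    Book save(Book book) {
        rows.put(book.id(), book);
        return book;
    }

    Optional<Book> findById(long id) {
        return Optional.ofNullable(rows.get(id));
    }
}

// Service: validations and business rules live here (@Service in Spring).
class BookService {
    private final BookRepository repository;

    BookService(BookRepository repository) {
        this.repository = repository;
    }

    Book create(long id, String title) {
        if (title == null || title.isBlank()) {
            throw new IllegalArgumentException("title must not be blank");
        }
        return repository.save(new Book(id, title));
    }

    Optional<Book> find(long id) {
        return repository.findById(id);
    }
}

// Controller: maps requests to service calls (@RestController in Spring).
class BookController {
    private final BookService service;

    BookController(BookService service) {
        this.service = service;
    }

    String getBook(long id) {
        return service.find(id).map(Book::title).orElse("404 Not Found");
    }
}
```

Each layer only talks to the one directly below it, which is what makes the pattern easy to describe to an LLM one layer at a time.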

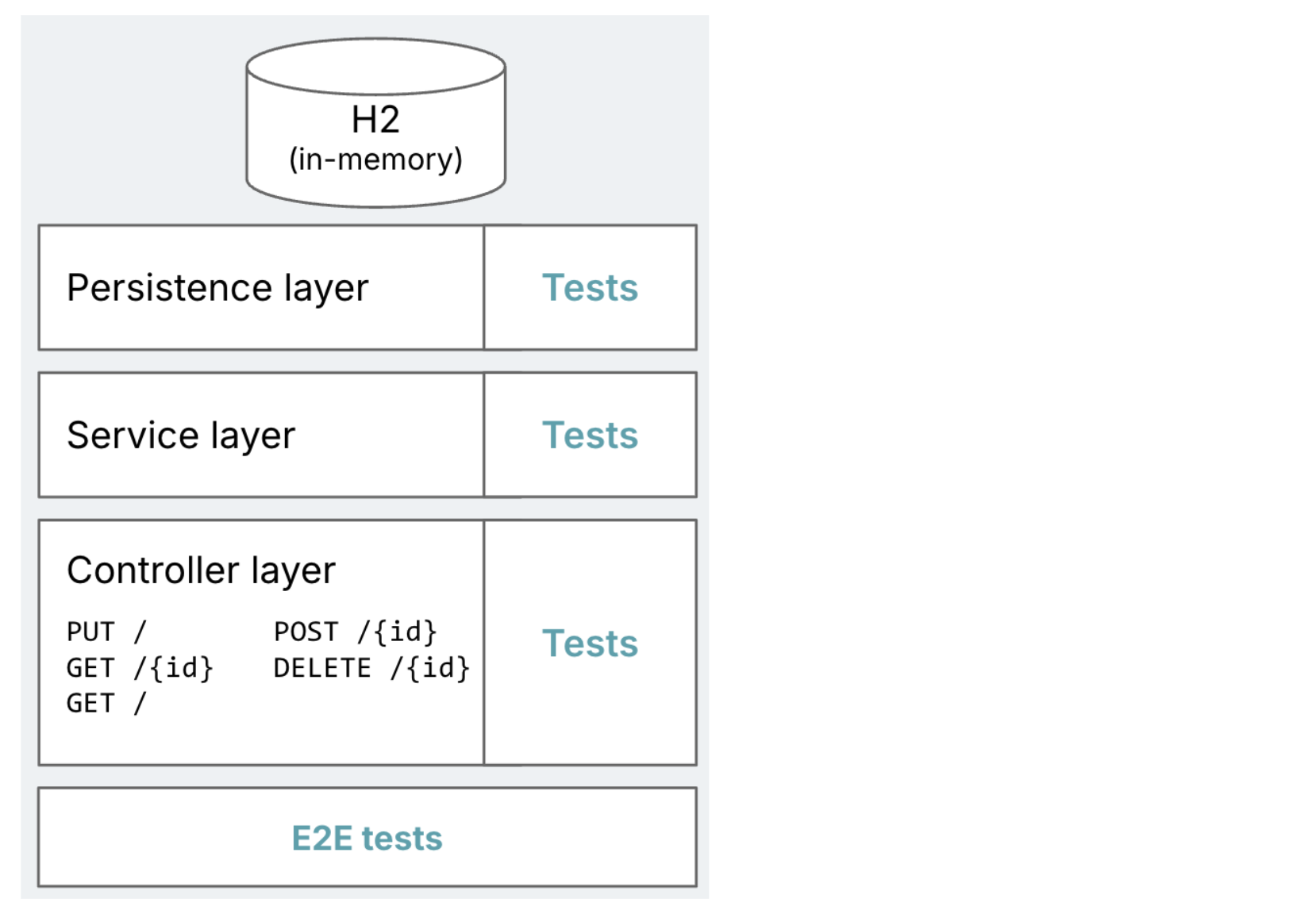

Multiple agents

We split the generation process into multiple agents. “Agent” here
means that each of these steps is handled by a separate LLM session, with
a specific role and instruction set. We did not make any other
configurations per step for now, e.g. we did not use different models for
different steps.

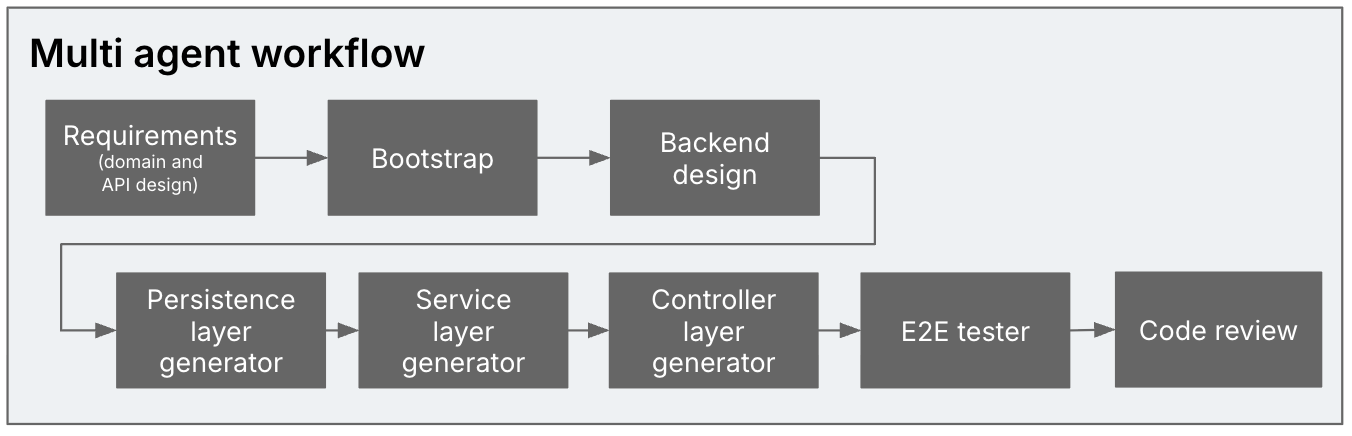

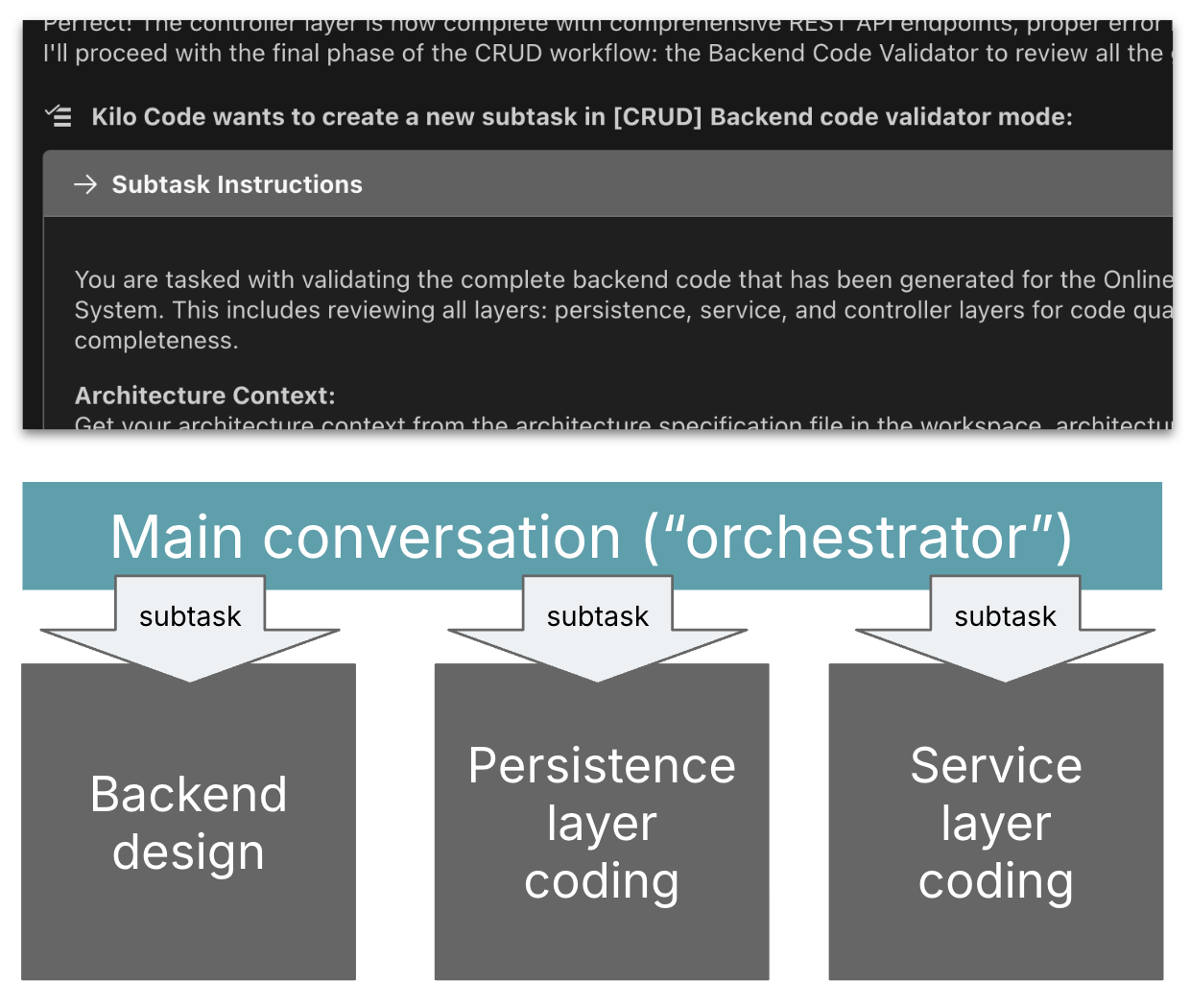

Figure 2: Multiple agents in the generation
process: Requirements analyst -> Bootstrapper -> Backend designer ->
Persistence layer generator -> Service layer generator -> Controller layer
generator -> E2E tester -> Code reviewer
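The idea of one separate LLM session per step can be sketched roughly as follows. This is not how Kilo Code or Roo Code are implemented: `LlmSession`, `AgentStep` and `AgentPipeline` are hypothetical names, and passing a single string from step to step is a simplification (in the setup described here, the steps work on a shared codebase).

```java
import java.util.List;
import java.util.function.Supplier;

// One workflow step: a role name plus its own instruction set.
record AgentStep(String role, String instructions) {}

// Hypothetical stand-in for a single LLM session with its own context window.
interface LlmSession {
    String run(String instructions, String input);
}

class AgentPipeline {
    private final List<AgentStep> steps;
    private final Supplier<LlmSession> sessionFactory;

    AgentPipeline(List<AgentStep> steps, Supplier<LlmSession> sessionFactory) {
        this.steps = List.copyOf(steps);
        this.sessionFactory = sessionFactory;
    }

    // Runs the steps in order. Every step gets a fresh session, so only the
    // previous step's output is carried over, not its conversation history.
    String run(String requirements) {
        String handover = requirements;
        for (AgentStep step : steps) {
            LlmSession session = sessionFactory.get();
            handover = session.run(step.instructions(), handover);
        }
        return handover;
    }
}
```

The session factory is the part that matters: it is what keeps each step's context small and focused on one role.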

To not taint the results with subpar coding abilities, we used a setup
on top of an existing coding assistant that has a bunch of coding-specific
abilities already: It can read and search a codebase, react to linting
errors, retry when it fails, and so on. We needed one that can orchestrate
subtasks with their own context window. The only one we were aware of at the time
that can do that is Roo Code, and
its fork Kilo Code. We used the latter. This gave
us a facsimile of a multi-agent coding setup without having to build
something from scratch.

Figure 3: Subtasking setup in Kilo: An
orchestrator session delegates to subtask sessions

With a carefully curated allow-list of terminal commands, a human only
needs to hit “approve” here and there. We let it run in the background and
checked on it every now and then, and Kilo gave us a sound notification
whenever it needed input or an approval.

How can this increase the success rate?

Even though technically the context window sizes of LLMs are
increasing, LLM generation results still become more hit or miss the
longer a session becomes. Many coding assistants now offer the ability to
compress the context intermittently, but a common piece of advice to coders using
agents is still that they should restart coding sessions as frequently as
possible.

Secondly, it is a very established prompting practice to assign
roles and perspectives to LLMs to increase the quality of their results.
We could take advantage of that as well with this separation into multiple
agentic steps.

Stack-specific over general purpose

As you can maybe already tell from the workflow and its separation
into the typical controller, service and persistence layers, we didn’t
shy away from using techniques and prompts specific to the Spring target
stack.

How can this increase the success rate?

One of the key things people are excited about with Generative AI is
that it can be a general purpose code generator that can turn natural
language specifications into code in any stack. However, just telling
an LLM to “write a Spring Boot application” is not going to yield the
high quality and contextual code you need in a real-world digital
product scenario without further instructions (more on that in the
results section). So we wanted to see how stack-specific our setup would
have to become to make the results high quality and repeatable.

Use of deterministic scripts

For bootstrapping the application, we used a shell script rather than
having the LLM do this. After all, there is a CLI to create an up to
date, idiomatically structured Spring Boot application, so why would we
want AI to do this?

The bootstrapping step was the only one where we used this technique,
but it’s worth remembering that an agentic workflow like this by no
means has to be entirely up to AI. We can mix and match with “proper
software” wherever appropriate.

Code examples in prompts

Using example code snippets for the various patterns (Entity,
Repository, …) turned out to be the most effective way to get AI
to generate the type of code we wanted.

How can this increase the success rate?

Why do we need these code samples, and why does it matter for our digital
products and business application software lens?

The simplest example from our experiment is the use of libraries. For
example, if not specifically prompted, we found that the LLM frequently
uses javax.persistence, which has been superseded by
jakarta.persistence. Extrapolate that example to a large engineering
organization that has a specific set of coding patterns, libraries, and
idioms that they want to use consistently across all their codebases.
Sample code snippets are a very effective way to communicate these
patterns to the LLM, and ensure that it uses them in the generated
code.
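As an illustration of what embedding a sample snippet in a prompt might look like, here is a small sketch that assembles a prompt around an example entity. The prompt wording, the `Product` entity and the `PersistencePromptBuilder` class are all made up for this example and are not the prompts used in the experiment; the only detail taken from the text is that the sample imports from `jakarta.persistence`.

```java
// Builds the prompt for a hypothetical "persistence layer generator" step.
class PersistencePromptBuilder {

    // Sample entity the LLM should imitate, including its imports.
    static final String ENTITY_SAMPLE = """
            import jakarta.persistence.Entity;
            import jakarta.persistence.GeneratedValue;
            import jakarta.persistence.Id;

            @Entity
            public class Product {
                @Id
                @GeneratedValue
                private Long id;
                private String name;
            }
            """;

    // Combines the task description with the sample so that library
    // choices are shown to the model rather than left to its defaults.
    static String build(String entityDescription) {
        return "Generate a JPA entity for: " + entityDescription + "\n"
                + "Follow this example exactly, including its imports:\n\n"
                + ENTITY_SAMPLE;
    }
}
```

A call like `PersistencePromptBuilder.build("Book with title and ISBN")` yields a prompt in which the desired imports are spelled out instead of described.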

Also consider the use case of AI maintaining this application over time,
and not just creating its first version. We would want it to be ready to use
a new framework or new framework version as and when it becomes relevant, without
having to wait for it to be dominant in the model’s training data. We would
need a way for the AI tooling to reliably pick up on these library nuances.

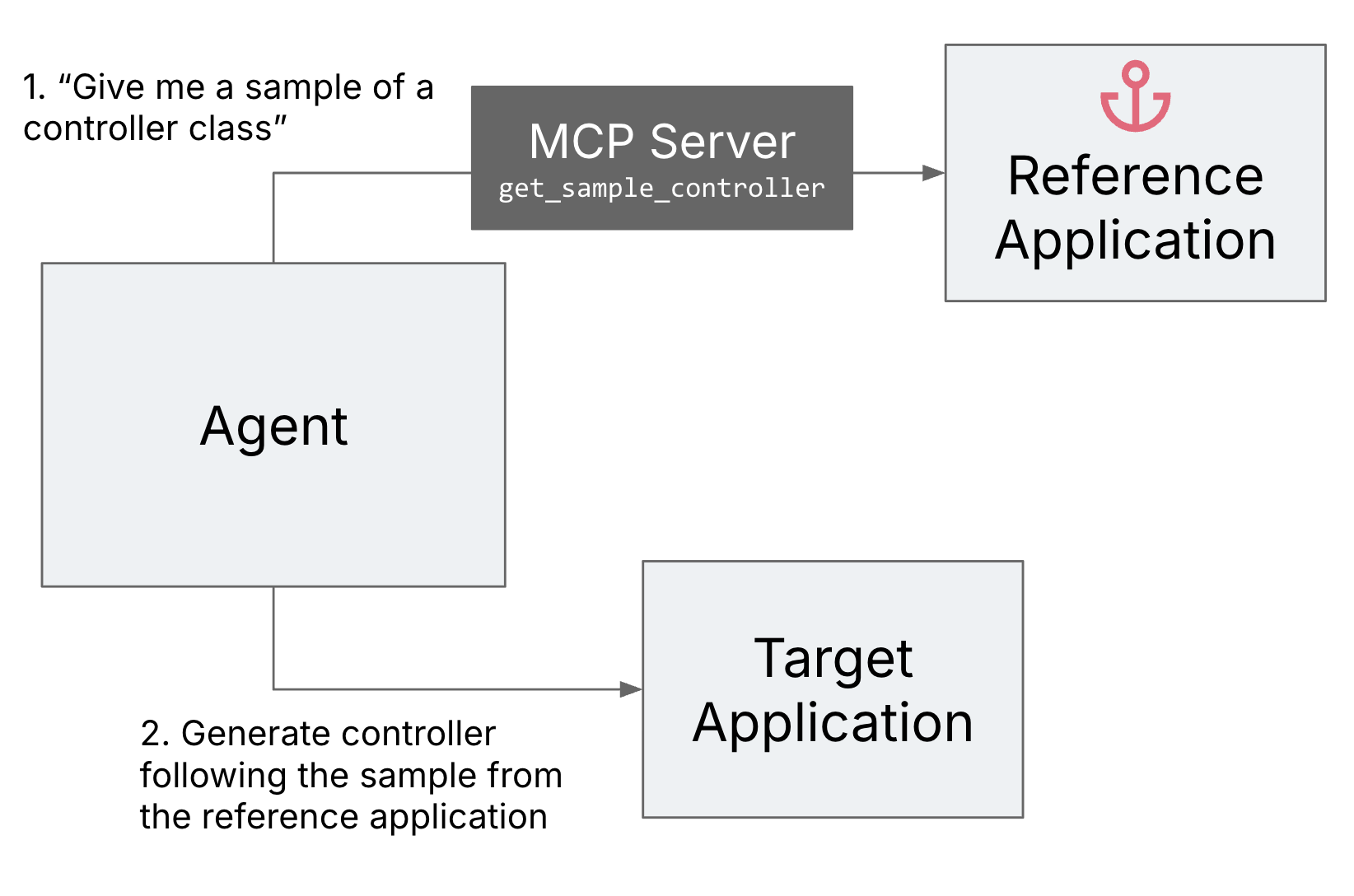

Reference application as an anchor

It turned out that maintaining the code examples in the natural
language prompts is quite tedious. When you iterate on them, you don’t
get immediate feedback to see if your sample would actually compile, and
you also have to make sure that all the separate samples you provide are
consistent with each other.

To improve the experience of the developer implementing the
agentic workflow, we set up a reference application and an MCP (Model
Context Protocol) server that can provide the sample code to the agent
from this reference application. This way we could easily make sure that
the samples compile and are consistent with each other.

Figure 4: Reference application as an
anchor

Generate-review loops

We introduced a review agent to double check AI’s work against the
original prompts. This added an additional safety net to catch mistakes
and ensure the generated code adhered to the requirements and
instructions.

How can this increase the success rate?

In an LLM’s first generation, it often doesn’t follow all the
instructions correctly, especially when there are a lot of them.
However, when asked to review what it created, and how it matches the
original instructions, it’s usually quite good at reasoning about the
fidelity of its work, and can fix many of its own mistakes.
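A generate-review loop of this kind could be sketched as below. The generator and reviewer are passed in as plain functions because the actual agent calls are tool-specific; `Review` and `GenerateReviewLoop` are hypothetical names, and the round limit is an assumption added so the loop cannot run forever.

```java
import java.util.List;
import java.util.function.BiFunction;

// Hypothetical reviewer verdict: no findings means the code matches the instructions.
record Review(List<String> findings) {
    boolean approved() {
        return findings.isEmpty();
    }
}

class GenerateReviewLoop {

    // generator: (instructions, findings of the previous review) -> code
    // reviewer:  (instructions, code) -> review against the ORIGINAL instructions
    static String run(String instructions,
                      BiFunction<String, List<String>, String> generator,
                      BiFunction<String, String, Review> reviewer,
                      int maxRounds) {
        List<String> findings = List.of();
        for (int round = 1; round <= maxRounds; round++) {
            String code = generator.apply(instructions, findings);
            Review review = reviewer.apply(instructions, code);
            if (review.approved()) {
                return code;
            }
            findings = review.findings(); // feed the findings into the next attempt
        }
        throw new IllegalStateException(
                "No approved result after " + maxRounds + " rounds; a human should take a look");
    }
}
```

Failing loudly after the last round is a deliberate choice in this sketch: returning unreviewed code would hide exactly the kind of problem the review step is meant to surface.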

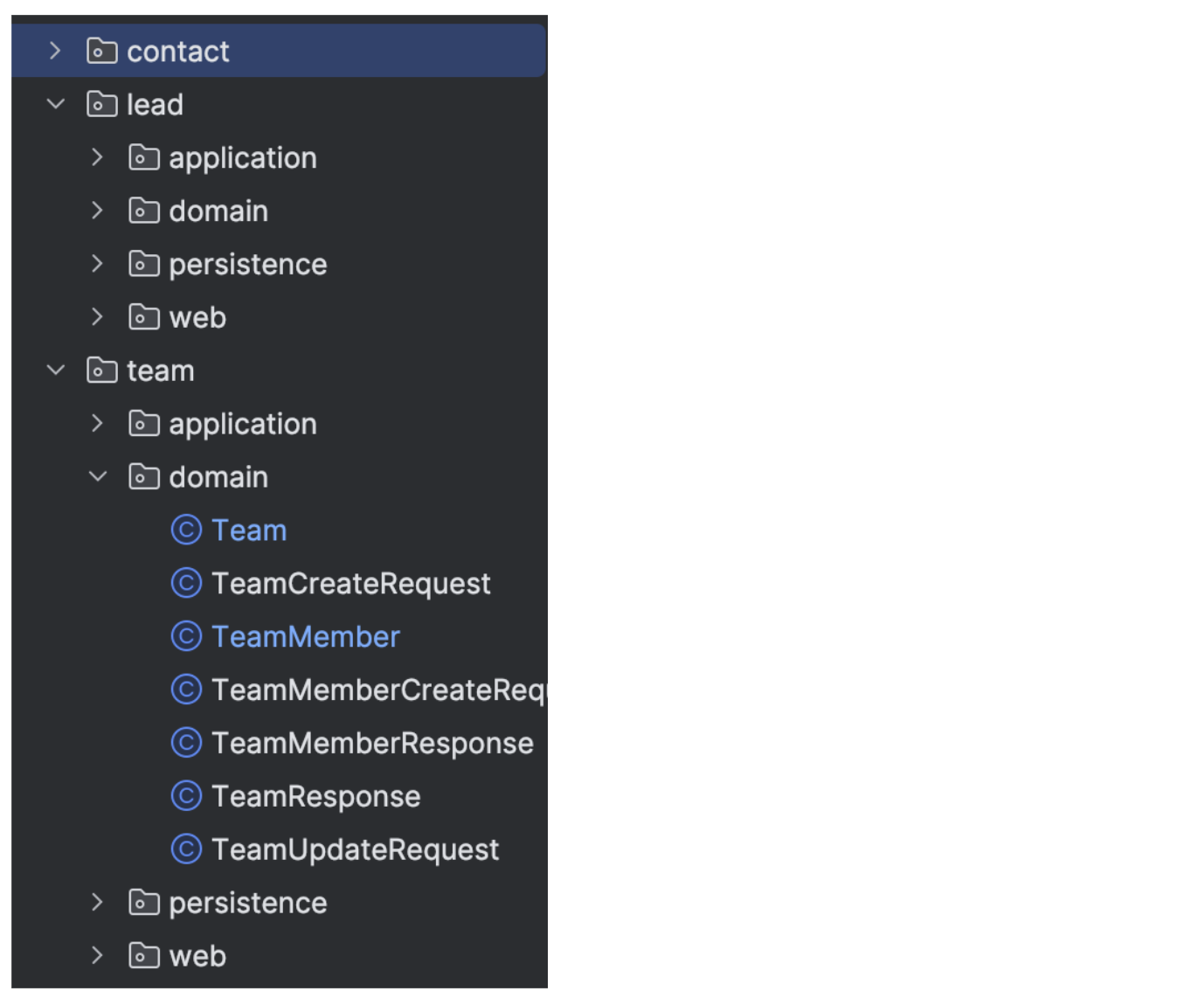

Codebase modularization

We asked the AI to divide the domain into aggregates, and use those
to determine the package structure.

Figure 5: Sample of modularised
package structure

This is actually an example of something that was hard to get AI to
do without human oversight and correction. It is a concept that is also
hard for humans to do well.

Here is a prompt excerpt where we ask AI to
group entities into aggregates during the requirements analysis
step:

An aggregate is a cluster of domain objects that can be treated as a
single unit, it must stay internally consistent after each business
operation.

For each aggregate:

- Identify root and contained entities
- Explain why this aggregate is sized the way it is
(transaction size, concurrency, read/write patterns).

We didn’t spend much effort on tuning these instructions and they can probably be improved,
but in general, it’s not trivial to get AI to apply a concept like this well.

How can this increase the success rate?

There are many benefits of code modularisation that
improve the quality of the runtime, like performance of queries, or
transactionality concerns. But it also has many benefits for
maintainability and extensibility – for both humans and AI:

- Good modularisation limits the number of places where a change needs to be
made, which means less context for the LLM to keep in mind during a change.
- You can re-apply an agentic workflow like this one to one module at a time,
limiting token usage, and reducing the size of a change set.
- Being able to clearly limit an AI task’s context to specific code modules
opens up possibilities to “freeze” all others, to reduce the chance of
unintended changes. (We did not try this here though.)

Results

Round 1: 3-5 entities

For most of our iterations, we used domains like “Simple product catalog”
or “Book tracking in a library”, and edited down the domain design done by the
requirements analysis phase to a maximum of 3-5 entities. The only logic in
the requirements were a few validations, other than that we just asked for
simple CRUD APIs.

We ran about 15 iterations of this category, with increasing sophistication
of the prompts and setup. An iteration for the full workflow usually took
about 25-30 minutes, and cost $2-3 of Anthropic tokens ($4-5 with
“thinking” enabled).

Ultimately, this setup could repeatedly generate a working application that
followed most of our specifications and conventions with hardly any human
intervention. It always ran into some errors, but could frequently fix its
own errors itself.

Round 2: Pre-existing schema with 10 entities

To crank up the size and complexity, we pointed the workflow at a
pared down existing schema for a Customer Relationship Management
application (~10 entities), and also switched from in-memory H2 to
Postgres. Like in round 1, there were a few validation and business
rules, but no logic beyond that, and we asked it to generate CRUD API
endpoints.

The workflow ran for 4–5 hours, with quite a few human
interventions in between.

As a second step, we provided it with the full set of fields for the
main entity, and asked it to expand it from 15 to 50 fields. This ran
for another hour.

A game of whac-a-mole

Overall, we could definitely see an improvement as we were applying
more of the strategies. But ultimately, even in this quite controlled
setup with very specific prompting and a relatively simple target
application, we still found issues in the generated code all the time.
It’s a bit like whac-a-mole: every time you run the workflow, something
else happens, and you add something else to the prompts or the workflow
to try and mitigate that.

These were some of the patterns that are particularly problematic for
a real world business application or digital product:

Overeagerness

We frequently got additional endpoints and features that we did not
ask for in the requirements. We even saw it add business logic that we
didn’t ask for, e.g. when it came across a domain term that it knew how
to calculate. (“Pro-rated revenue, I know what that is! Let me add the
calculation for that.”)

Possible mitigation

This can be reined in to an extent with the prompts, and by repeatedly
reminding AI that we ONLY want what is specified. The reviewer agent can
also help catch some of the excess code (though we’ve seen the reviewer
delete too much code in its attempt to fix that). But this still
happened in some shape or form in almost all of our iterations. We made
one attempt at lowering the temperature to see if that would help, but
as it was only one attempt in an earlier version of the setup, we can’t
conclude much from the results.

Gaps in the requirements will be filled with assumptions

A priority: String field in an entity was assumed by AI to have the
value set “1”, “2”, “3”. When we introduced the expansion to more fields
later, even though we didn’t ask for any changes to the priority
field, it changed its assumptions to “low”, “medium”, “high”. Apart from
the fact that it would be a lot better to have introduced an Enum
here, as long as the assumptions stay in the tests only, it might not be
a big issue yet. But this could be quite problematic and have a heavy
impact on a production database if it happened to a default
value.
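For illustration, an Enum for this field might look like the sketch below. The value set low/medium/high is an assumption for the example (the requirements in the experiment did not specify one), and `Priority` and `fromLabel` are hypothetical names.

```java
import java.util.Arrays;
import java.util.Optional;

// The value set is written down once instead of being guessed per generation.
// LOW/MEDIUM/HIGH is an assumed value set, chosen only for this example.
enum Priority {
    LOW("low"), MEDIUM("medium"), HIGH("high");

    private final String label;

    Priority(String label) {
        this.label = label;
    }

    String label() {
        return label;
    }

    // Returns empty for anything outside the value set, e.g. "1" or "urgent".
    static Optional<Priority> fromLabel(String label) {
        return Arrays.stream(values())
                .filter(p -> p.label.equals(label))
                .findFirst();
    }
}
```

With the value set in the type, a silent switch from “1”, “2”, “3” to “low”, “medium”, “high” becomes a visible change to the enum instead of a drifting assumption in test data.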

Possible mitigation

We’d somehow have to make sure that the requirements we give are as
complete and detailed as possible, and include a value set in this case.
But historically, we have not been great at that… We have seen some AI
be very helpful in helping humans find gaps in their requirements, but
the risk of incomplete or incoherent requirements always remains. And
the goal here was to test the boundaries of AI autonomy, so that
autonomy is definitely limited at this requirements step.

Brute force fixes

“[There is a ] lazy-loaded relationship that is causing JSON
serialization problems. Let me fix this by adding @JsonIgnore to the
field”. Similar things have also happened to me multiple times in
agent-assisted coding sessions, from “the build is running out of
memory, let’s just allocate more memory” to “I can’t get the test to
work right now, let’s skip it for now and move on to the next task”.

Possible mitigation

We don’t have any idea how to prevent this.

Declaring success in spite of red tests

AI frequently claimed the build and tests were successful and moved
on to the next step, even though they were not, and even though our
instructions explicitly stated that the task is not done if build or
tests are failing.

Possible mitigation

This might be easier to fix than the other things mentioned here,
by a more sophisticated agent workflow setup that has deterministic
checkpoints and does not allow the workflow to continue unless tests are
green. However, experience from agentic workflows in business process
automation has already shown that LLMs find ways to get around
that. In the case of code generation,
I would imagine they would still delete or skip tests to get beyond that
checkpoint.
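A deterministic checkpoint of this kind was not part of our setup, but a minimal sketch might look like the following. It assumes a hypothetical `BuildResult` summary that the workflow fills in from the build tool’s exit code and test report, and it compares the number of executed tests against a baseline to notice deleted or skipped tests.

```java
// Hypothetical summary of one build run, e.g. parsed from the build tool's test report.
record BuildResult(int exitCode, int testsRun, int testsFailed, int testsSkipped) {}

class TestGate {
    private final int baselineTestCount;

    // baselineTestCount: number of tests that ran before the agent's step started.
    TestGate(int baselineTestCount) {
        this.baselineTestCount = baselineTestCount;
    }

    // The workflow may only continue if the build is green AND
    // no tests have been skipped or have disappeared since the baseline.
    boolean mayProceed(BuildResult result) {
        boolean green = result.exitCode() == 0 && result.testsFailed() == 0;
        boolean nothingSkipped = result.testsSkipped() == 0;
        boolean nothingDeleted = result.testsRun() >= baselineTestCount;
        return green && nothingSkipped && nothingDeleted;
    }
}
```

A gate like this is decided by code rather than by the LLM’s own claim of success. It would still not notice a test whose assertions were weakened, so it narrows the loophole rather than closing it.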

Static code analysis issues

We ran SonarQube static code analysis on
two of the generated codebases. Here is an excerpt of the issues that
were found:

| Issue | Severity | Sonar tags | Notes |
|---|---|---|---|
| Replace this usage of ‘Stream.collect(Collectors.toList())’ with ‘Stream.toList()’ and ensure that the list is unmodified. | Major | java16 | From Sonar’s “Why”: The key problem is that .collect(Collectors.toList()) actually returns a mutable kind of List while in the majority of cases unmodifiable lists are preferred. |
| Merge this if statement with the enclosing one. | Major | clumsy | In general, we saw a lot of ifs and nested ifs in the generated code, in particular in mapping and validation code. On a side note, we also saw a lot of null checks with `if` instead of the use of `Optional`. |
| Remove this unused method parameter “event”. | Major | cert, unused | From Sonar’s “Why”: A typical code smell known as unused function parameters refers to parameters declared in a function but not used anywhere within the function’s body. While this might seem harmless at first glance, it can lead to confusion and potential errors in your code. |
| Complete the task associated to this TODO comment. | Info | | AI left TODOs in the code, e.g. “// TODO: This would be populated by joining with lead entity or separate service calls. For now, we’ll leave it null – it can be populated by the service layer” |
| Define a constant instead of duplicating this literal (…) 10 times. | Critical | design | From Sonar’s “Why”: Duplicated string literals make the process of refactoring complex and error-prone, as any change would need to be propagated on all occurrences. |
| Call transactional methods via an injected dependency instead of directly via ‘this’. | Critical | | From Sonar’s “Why”: A method annotated with Spring’s @Async, @Cacheable or @Transactional annotations will not work as expected if invoked directly from within its class. |

I would argue that all of these issues are relevant observations that lead to
harder and riskier maintainability, even in a world where AI does all the
maintenance.
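To make two of the findings from the table more tangible, here is a small illustrative sketch of the suggested alternatives: `Stream.toList()` instead of `collect(Collectors.toList())`, and `Optional` instead of a null check with `if`. The class and method names are invented for this example and are not taken from the generated codebases.

```java
import java.util.List;
import java.util.Optional;

class SonarFindingsDemo {

    // Stream.toList() (Java 16+) returns an unmodifiable list,
    // so callers cannot change the result by accident.
    static List<String> upperCaseNames(List<String> names) {
        return names.stream()
                .map(String::toUpperCase)
                .toList();
    }

    // Optional expresses "may be missing" without an explicit null check with `if`.
    static String displayName(String nickname) {
        return Optional.ofNullable(nickname)
                .map(String::trim)
                .filter(n -> !n.isEmpty())
                .orElse("anonymous");
    }
}
```

Trying to call `add` on the list returned by `upperCaseNames` throws an `UnsupportedOperationException`, which is the behaviour the Sonar rule is steering towards.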

Possible mitigation

It is of course possible to add an agent to the workflow that looks at the
issues and fixes them one by one. However, I know from the real world that not
all of them are relevant in every context, and teams often deliberately mark
issues as “won’t fix”. So there is still some nuance