Large language models (LLMs) excel at using textual reasoning to understand the context of a document and provide a logical answer about its contents. But these same LLMs often struggle to correctly answer even the simplest math problems.

Textual reasoning is usually a less-than-ideal way to deliberate over computational or algorithmic tasks. While some LLMs can generate code like Python to handle symbolic queries, the models don’t always know when to use code, or what kind of code would work best.

LLMs, it seems, may need a coach to steer them toward the best technique.

Enter CodeSteer, a smart assistant developed by MIT researchers that guides an LLM to switch between code and text generation until it correctly answers a query.

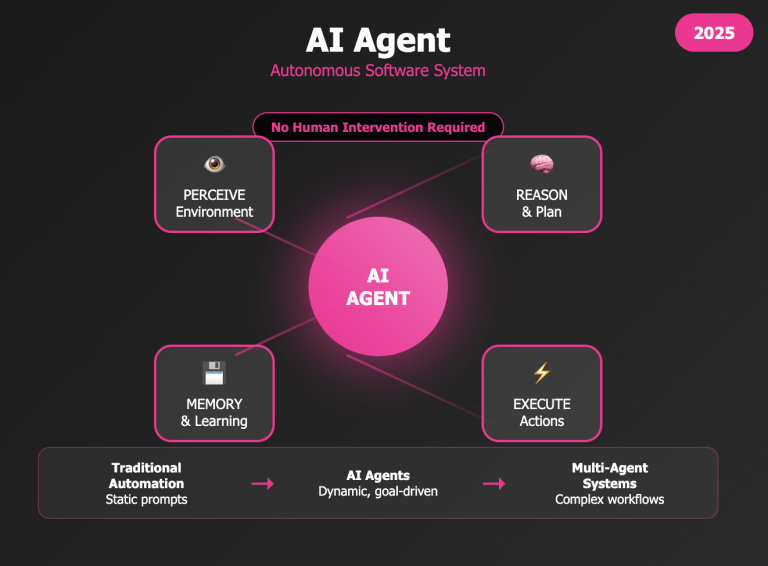

CodeSteer, itself a smaller LLM, automatically generates a series of prompts to iteratively steer a larger LLM. It reviews the model’s current and previous answers after each round and provides guidance for how it can fix or refine that solution until it deems the answer is correct.

The researchers found that augmenting a larger LLM with CodeSteer boosted its accuracy on symbolic tasks, like multiplying numbers, playing Sudoku, and stacking blocks, by more than 30 percent. It also enabled less sophisticated models to outperform more advanced models with enhanced reasoning skills.

This advance could improve the problem-solving capabilities of LLMs for complex tasks that are especially difficult to solve with textual reasoning alone, such as generating paths for robots in uncertain environments or scheduling shipments in a global supply chain.

“There’s a race to develop better and better models that are capable of doing everything, but we’ve taken a complementary approach. Researchers have spent years developing effective technologies and tools to tackle problems in many domains. We want to enable LLMs to select the right tools and methods, and make use of others’ expertise to enhance their own capabilities,” says Chuchu Fan, an associate professor of aeronautics and astronautics (AeroAstro) and principal investigator in the MIT Laboratory for Information and Decision Systems (LIDS).

Fan, the senior author of the study, is joined on a paper about the work by LIDS graduate student Yongchao Chen; AeroAstro graduate student Yilun Hao; University of Illinois at Urbana-Champaign graduate student Yueying Liu; and MIT-IBM Watson AI Lab Research Scientist Yang Zhang. The research will be presented at the International Conference on Machine Learning.

An LLM “coach”

Ask an LLM which number is bigger, 9.11 or 9.9, and it will often give the wrong answer by using textual reasoning. But ask it to use code to answer the same question, and it can generate and execute a Python script to compare the two numbers, easily solving the problem.
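As a minimal illustration of the kind of script described here (written for this article, not taken from any model’s output), the comparison takes only a few lines of Python. The helper name `larger` is an assumption made for the example.

```python
from decimal import Decimal


def larger(a: str, b: str) -> str:
    """Return whichever decimal string represents the larger number."""
    # Decimal parses the digits exactly, so 9.11 and 9.9 are compared as
    # numbers rather than as text or version-style strings.
    return a if Decimal(a) > Decimal(b) else b


print(larger("9.11", "9.9"))  # prints 9.9
```

Once the question is posed as a numeric comparison, there is no ambiguity left for the model to reason its way around.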

Initially trained to understand and predict human language, LLMs are more likely to answer queries using text, even when code would be more effective. And while they have learned to generate code through fine-tuning, these models often generate an incorrect or less efficient version of the code.

Rather than trying to retrain a powerful LLM like GPT-4 or Claude to improve these capabilities, the MIT researchers fine-tune a smaller, lightweight LLM to guide a larger model between text and code. Fine-tuning a smaller model doesn’t change the larger LLM, so there is no risk it would undermine the larger model’s other abilities.

“We were also inspired by humans. In sports, a coach may not be better than the star athlete on the team, but the coach can still give helpful suggestions to guide the athlete. This steering method works for LLMs, too,” Chen says.

This coach, CodeSteer, works in conjunction with the larger LLM. It first reviews a query and determines whether text or code is suitable for this problem, and which sort of code would be best.

Then it generates a prompt for the larger LLM, telling it to use a coding method or textual reasoning to answer the query. The larger model follows this prompt to answer the query and sends the result back to CodeSteer, which reviews it.

If the answer is not correct, CodeSteer will continue prompting the LLM to try different things that might fix the problem, such as incorporating a search algorithm or constraint into its Python code, until the answer is correct.
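The review-and-reprompt cycle described above can be sketched as a simple loop. This is an illustrative toy, not the researchers’ implementation: the `steer` function, the `toy_guide` and `toy_solver` stand-ins, and the `max_rounds` cap are all assumptions made for the example, and the hard-coded correctness check stands in for the real review step.

```python
from typing import Callable, List, Tuple

# Hypothetical interfaces, assumed for this sketch only.
Guide = Callable[[str, List[str]], Tuple[str, bool]]  # (query, history) -> (prompt, done)
Solver = Callable[[str], str]                         # prompt -> answer


def steer(query: str, guide: Guide, solver: Solver, max_rounds: int = 5) -> List[str]:
    """Alternate between a small guiding model and a larger solving model."""
    history: List[str] = []
    for _ in range(max_rounds):  # the round cap is an assumption, not from the article
        prompt, done = guide(query, history)
        if done:  # the guide judged the latest answer to be correct
            break
        history.append(solver(prompt))
    return history


def toy_guide(query: str, history: List[str]) -> Tuple[str, bool]:
    """Stand-in for the guiding model: try text first, then switch to code."""
    if not history:
        return f"Answer with textual reasoning: {query}", False
    if history[-1] == "9.9":  # toy correctness check, hard-coded for the demo
        return "", True
    return f"Answer by writing and running code: {query}", False


def toy_solver(prompt: str) -> str:
    """Stand-in for the larger model: wrong in text mode, right in code mode."""
    if "code" in prompt:
        return str(max(9.11, 9.9))
    return "9.11"


answers = steer("Which is larger, 9.11 or 9.9?", toy_guide, toy_solver)
print(answers)  # prints ['9.11', '9.9']
```

In this toy run the first round uses textual reasoning and fails, the second round switches to code and succeeds, and the third call to the guide ends the loop.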

“We found that oftentimes, the larger LLM will try to be lazy and use a shorter, less efficient code that will not carry the correct symbolic calculation. We’ve designed CodeSteer to avoid this phenomenon,” Chen says.

A symbolic checker evaluates the code’s complexity and sends a signal to CodeSteer if it is too simple or inefficient. The researchers also incorporate a self-answer checker into CodeSteer, which prompts the LLM to generate code that calculates the answer to verify it is correct.
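The article does not spell out how the symbolic checker scores code, so the following is only a rough sketch of the general idea, using Python’s standard `ast` module. The scoring weights, the threshold, and the names `complexity_score` and `looks_too_simple` are all assumptions made for illustration.

```python
import ast


def complexity_score(source: str) -> int:
    """Crude structural score: loops count double, branches and calls count once."""
    score = 0
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.For, ast.While, ast.comprehension)):
            score += 2  # iteration suggests real computation or search
        elif isinstance(node, (ast.If, ast.FunctionDef, ast.Call)):
            score += 1
    return score


def looks_too_simple(source: str, threshold: int = 3) -> bool:
    """Flag code whose score falls below an arbitrary, assumed threshold."""
    return complexity_score(source) < threshold


lazy = "print(42)"
searching = "for i in range(10):\n    if i % 3 == 0:\n        print(i)"
print(looks_too_simple(lazy), looks_too_simple(searching))  # prints True False
```

A script that merely prints a hard-coded answer scores low and would be flagged, while one that actually iterates and tests conditions passes this heuristic.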

Tackling complex tasks

As the researchers designed CodeSteer, they couldn’t find suitable symbolic datasets to fine-tune and test the model, since many existing benchmarks don’t point out whether a certain query could be best solved with text or code.

So, they gathered a corpus of 37 complex symbolic tasks, including spatial reasoning, arithmetic, order reasoning, and optimization, and built their own dataset, called SymBench. They implemented a fine-tuning approach that leverages SymBench to maximize the performance of CodeSteer.

In their experiments, CodeSteer outperformed all nine baseline methods they evaluated and boosted average accuracy from 53.3 percent to 86.4 percent. It maintains similar performance even on unseen tasks, and on a variety of LLMs.

In addition, a general-purpose model augmented with CodeSteer can achieve higher accuracy than state-of-the-art models designed to focus on complex reasoning and planning, while requiring much less computation.

“Our method uses an LLM’s own capabilities. By augmenting an LLM with the ability to smartly use coding, we can take a model that is already very strong and improve its performance even more,” Chen says.

In the future, the researchers want to streamline CodeSteer to speed up its iterative prompting process. In addition, they are studying how to effectively fine-tune a unified model with the ability to switch between textual reasoning and code generation, rather than relying on a separate assistant.

“The authors present an elegant solution to the critical challenge of tool utilization in LLMs. This simple yet impactful method enables state-of-the-art LLMs to achieve significant performance improvements without requiring direct fine-tuning,” says Jinsung Yoon, a staff research scientist at Google Cloud AI, who was not involved with this work. “This research represents a substantial contribution that promises to significantly enhance the application of LLMs to a diverse range of tasks with which they currently struggle.”

“Their success in training a smaller, specialized model to strategically guide larger, advanced models is particularly impactful,” adds Chi Wang, a senior staff scientist at Google DeepMind who was not involved with this work. “This intelligent collaboration among diverse AI ‘agents’ paves the way for more robust and versatile applications in complex real-world scenarios.”

This research is supported, in part, by the U.S. Office of Naval Research and the MIT-IBM Watson AI Lab.